What is Face Recognition – How Does it Work?

March 14, 2023, 6 min read

Face recognition compares data captured from a person’s face against a library of recognised faces. Identity verification by face recognition has the potential to improve security, but it also raises ethical concerns.

Forecasts placed the value of the facial recognition industry at $7.7 billion by 2022, up from $4 billion in 2017. That’s because there are numerous commercial uses for biometric face recognition and authentication technology, ranging from security to advertising.

It gets tricky, though, when you consider privacy. If you value your privacy, you may want some say over what happens to the data that identifies you, and your face, once captured by a face recognition system, is exactly that kind of data.

What is Face Recognition?

Facial recognition is one way to identify a person or verify their identity. The technology can identify individuals in still images, in video, or in real time.

Face recognition belongs to the field of biometrics, and it is most often used for security. Voice recognition, fingerprint scanning, and retina/iris scanning are other examples of biometric technology. Aside from security and law enforcement, face recognition is starting to attract attention in other fields.

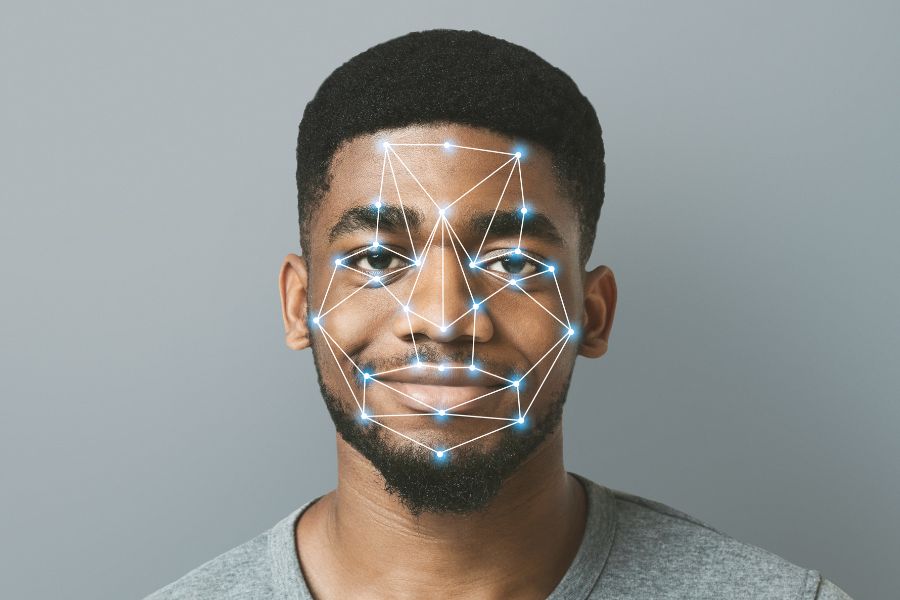

How does Face Recognition Work?

Face ID, Apple’s AI-based face recognition system for unlocking iPhones, has brought the technology to the mainstream, although this is only one application of face recognition. In this kind of use, facial recognition doesn’t need a huge image database to establish who a person is; it only confirms that one person is the rightful owner of a device and prevents unauthorised users from accessing it.

Face recognition cameras can be used for a lot more than unlocking your phone. They can also keep tabs on people by comparing faces captured by specially designed cameras with photographs of people on watch lists, such as wanted criminals. Images on the watch lists might originate from anywhere, including our social media accounts, and can feature anyone, including those not suspected of wrongdoing. Although face recognition systems vary in how they function, they typically work in the following steps:

1. Detecting faces

The camera or app first detects and locates a face, whether it appears alone or in a group. The subject may be looking directly at the camera or appear in profile.

2. Analyzing the face

Next, an image of the face is captured and analysed. Most facial recognition technology relies on 2D rather than 3D images, because a 2D image is easier to compare with photographs already in a public database or on the internet. The software reads the geometry of your face. Important measurements include the width of your nose, the length of your forehead, the height of your cheekbones, and the shape of your chin and lips. The goal is to find the characteristics that distinguish your face from others.

3. Transforming the image into data

The face capture process converts analogue information (a face) into a set of digital information (data) based on the person’s facial features. In effect, the analysis of your face is reduced to a mathematical formula. The resulting numerical code is called a faceprint. Just as each person has distinctive fingerprints, each person has their own faceprint.

4. Matching

The next step is to compare your faceprint against a database of other known faces. The FBI, for instance, can search through as many as 650 million images from different state databases. On Facebook, any photo that is “tagged” with a user’s name is stored in Facebook’s database and can be used for facial recognition if the user permits it. If your faceprint is sufficiently similar to an image in the facial recognition database, a match is declared.
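The analysis, faceprint, and matching steps can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the landmark names, the pixel coordinates, the four chosen measurements, and the 0.1 threshold are all hypothetical, and production systems usually derive faceprints in more sophisticated ways than a handful of hand-picked distances. The sketch only shows the general idea of reducing a face to numbers and comparing those numbers.

```python
import math

def faceprint(landmarks):
    """Convert 2D landmark points into a vector of scale-normalised distances."""
    def span(a, b):
        return math.dist(landmarks[a], landmarks[b])

    eye_span = span("left_eye", "right_eye")  # unit of length; cancels image scale
    return [
        span("nose_left", "nose_right") / eye_span,    # nose width
        span("brow", "hairline") / eye_span,           # forehead length
        span("nose_tip", "chin") / eye_span,           # lower-face height
        span("mouth_left", "mouth_right") / eye_span,  # lip width
    ]

def verify(probe, enrolled, threshold=0.1):
    """One-to-one check, as when unlocking a phone."""
    return math.dist(probe, enrolled) <= threshold

def identify(probe, gallery, threshold=0.1):
    """One-to-many search, as when checking a watch list."""
    if not gallery:
        return None
    best = min(gallery, key=lambda name: math.dist(probe, gallery[name]))
    return best if math.dist(probe, gallery[best]) <= threshold else None

def scaled(landmarks, factor):
    """Simulate the same face photographed closer to or further from the camera."""
    return {name: (x * factor, y * factor) for name, (x, y) in landmarks.items()}

# Hypothetical landmark coordinates in pixels (not real measurements).
ALICE = {
    "left_eye": (30, 40), "right_eye": (70, 40),
    "nose_left": (42, 60), "nose_right": (58, 60),
    "brow": (50, 32), "hairline": (50, 10),
    "nose_tip": (50, 62), "chin": (50, 100),
    "mouth_left": (38, 80), "mouth_right": (62, 80),
}
BOB = {
    "left_eye": (30, 40), "right_eye": (70, 40),
    "nose_left": (38, 60), "nose_right": (62, 60),
    "brow": (50, 32), "hairline": (50, 16),
    "nose_tip": (50, 62), "chin": (50, 94),
    "mouth_left": (36, 80), "mouth_right": (64, 80),
}

gallery = {"alice": faceprint(ALICE), "bob": faceprint(BOB)}
probe = faceprint(scaled(ALICE, 2))  # a close-up of the first face

print(verify(probe, gallery["alice"]))
print(identify(probe, gallery))
```

Dividing every measurement by the distance between the eyes is one simple way to keep the faceprint stable when the same face appears larger or smaller in the image. The threshold controls the trade-off: a looser value accepts more genuine matches but also more false ones.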

Facial recognition is widely regarded as the most natural biometric measurement. This makes intuitive sense, given that in everyday life we recognise people by their faces far more often than by their thumbprints or irises. Over half of the world’s population may have their lives routinely affected by facial recognition technology.

How has facial recognition evolved over time?

The origins of facial recognition can be traced to the 1960s. Woodrow Wilson Bledsoe, a mathematician and computer scientist, created a system of measurements that could be used to classify photographs of faces. Because of his contributions in this area, Bledsoe is sometimes called the “unofficial father of facial recognition technology”.

Eventually, government bodies took notice of Bledsoe’s work and began to inquire about his research. Between the 1970s and the 1990s, some government agencies also built their own facial recognition systems. These were rudimentary compared with current technology, but they paved the way for today’s sophisticated facial recognition software.

Many cite 2001 as a turning point for facial recognition software. That year, security personnel at Super Bowl XXXV used facial recognition technology to help identify attendees. The Pinellas County Sheriff’s Office in Florida also developed its own facial recognition database that year.

Facial recognition wasn’t widely used until the 2010s, when computers became powerful enough to run it efficiently. In 2011, for example, facial recognition software was used to verify Osama bin Laden’s identity. In 2015, after Freddie Gray died from a spinal injury sustained in a police van, protesters in Baltimore were identified using facial recognition technology.

Consumers now routinely use facial recognition on their smartphones and other portable devices. In 2015, Windows Hello and Android’s Trusted Face let users unlock their devices by pointing them at their faces. In 2017, Apple introduced its Face ID facial recognition technology on the iPhone X.

This technology has been the subject of debate because some people feel that it violates their privacy. San Francisco, Oakland, and Boston are just a few of the cities that have outlawed the use of facial recognition by local authorities. In the wake of the Black Lives Matter protests against police brutality in the summer of 2020, a number of major technology companies, including Amazon, Microsoft, and IBM, stated that they would no longer sell their face recognition technology to law enforcement agencies.

How accurate is facial recognition technology?

Skeptics argue that face recognition can produce inaccurate identifications. What if, for example, a store window is broken during a riot and the police use face recognition technology to identify the perpetrator, but the person they identify was actually miles away? How often might this occur?

That is debatable. According to tests conducted by the National Institute of Standards and Technology (NIST) in April 2020, the most accurate facial recognition algorithm had an error rate of just 0.08%. The best algorithm in 2014 had an error rate of 4.1%, so this is a significant improvement.

According to a 2020 report by the Center for Strategic and International Studies (CSIS), accuracy is highest when identification algorithms are used to match people to clear, static images such as a passport photo or mugshot. According to the report, when applied in this manner, facial recognition algorithms can achieve accuracy scores of up to 99.97% on the Face Recognition Vendor Test administered by the National Institute of Standards and Technology.

In practice, however, accuracy tends to be lower. The CSIS report states that the Face Recognition Vendor Test found that one algorithm’s error rate rose from 0.1% when matching faces against high-quality mugshots to 9.3% when matching faces against photographs of individuals captured in public. Errors increased significantly when the subjects were not looking directly at the camera or were partially obscured.

Ageing is a further difficulty. The Face Recognition Vendor Test found that when matching against photographs of subjects taken 18 years earlier, the error rate for middle-tier facial recognition algorithms increased by approximately a factor of 10.
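To put the error rates quoted in this section into perspective, the short sketch below turns them into expected numbers of wrong results. It assumes, as a simplification, that every search fails independently at the quoted rate; the function names and the figure of 10,000 searches are hypothetical and chosen only for illustration.

```python
def expected_errors(error_rate_percent, searches):
    """Expected number of wrong results, assuming a constant, independent error rate."""
    return searches * error_rate_percent / 100

def change_factor(rate_a, rate_b):
    """How many times larger rate_a is than rate_b."""
    return rate_a / rate_b

# Error rates in percent, as quoted in the text above.
BEST_2014 = 4.1
BEST_2020 = 0.08
MUGSHOTS = 0.1
IN_PUBLIC = 9.3

SEARCHES = 10_000  # hypothetical workload

for label, rate in [
    ("best algorithm, 2014", BEST_2014),
    ("best algorithm, April 2020", BEST_2020),
    ("high-quality mugshots", MUGSHOTS),
    ("photographs taken in public", IN_PUBLIC),
]:
    print(f"{label}: about {expected_errors(rate, SEARCHES):.0f} errors per {SEARCHES} searches")

print(f"2014 to 2020 improvement: about {change_factor(BEST_2014, BEST_2020):.0f}x")
print(f"mugshots to public photos: about {change_factor(IN_PUBLIC, MUGSHOTS):.0f}x worse")
```

Under these assumptions, an error rate of 0.08% corresponds to about 8 wrong results per 10,000 searches, while 9.3% corresponds to about 930, which is why image quality matters so much in practice.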